How Does AI Work Technically

Ages 6+

Objective:

Understand what happens behind the scenes of AI and machine learning.

At its core, modern AI (specifically Large Language Models like the ones we use today) is essentially a highly advanced prediction machine.

Here is a breakdown of how AI works technically, explained through analogies that make the complex concepts accessible.

1. Rule-Based Automation

The Rigid Calculator

Think of rule-based automation like a recipe book or a flowchart. A human programmer writes down every single rule the computer must follow.

- How it works: "If the user types 'Hello,' then reply with 'Hi there!'"

- The Limit: The computer is only as smart as the rules written for it. If you type "Greetings," and the programmer didn't write a rule for that specific word, the computer gets stuck.

- Analogy: A digital calculator. It follows strict mathematical rules ($2 + 2$ always equals $4$), but it can't "invent" a new way to do math or understand the "vibe" of a number.
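For parents or older kids who want to see it in code, here is a minimal sketch of a rule-based program. The `RULES` table and the `rule_based_reply` function are made-up names for illustration, and ignoring upper/lower case is an assumption of this sketch. It contains only the one rule from the example above.

```python
# Hypothetical rule table: every reply must be written by hand in advance.
RULES = {
    "hello": "Hi there!",
}

def rule_based_reply(message):
    """Return the hand-written reply for a message, or None if no rule matches."""
    key = message.strip().lower()  # assumption: ignore case and extra spaces
    return RULES.get(key)

print(rule_based_reply("Hello"))      # matches the one rule we wrote
print(rule_based_reply("Greetings"))  # no rule for this word, so there is no answer
```

The only way to make this program handle "Greetings" is for a human to add another rule by hand.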

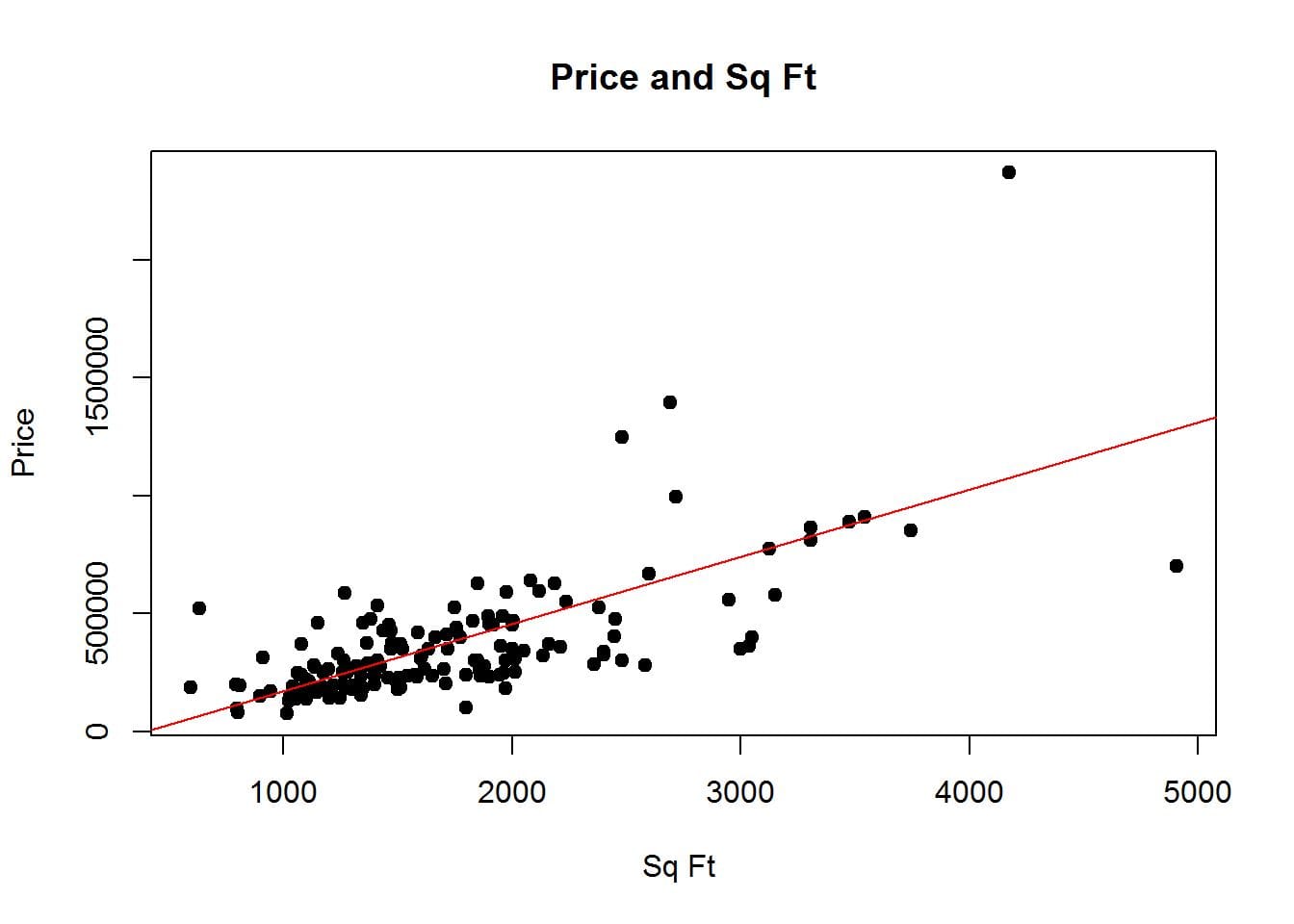

The "Line of Best Fit"

With Linear Regression, we stop giving the computer rules and start giving it examples (data).

Above is a graph where the X-axis is Size and the Y-axis is Price. We plot 100 actual sales from the last month. The dots are scattered everywhere.

- The Goal: The machine’s job is to draw a single straight line that gets as close to as many dots as possible.

- The "Learning": The machine starts with a random line. It calculates the "Error" (how far the line is from the actual dots). It then tilts and moves the line slightly to reduce that error.

- The Result: Eventually, it finds the "best" line. Now, when you give it a size it has never seen before, it just looks at where that size hits the line to give you a price.
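The steps above can be sketched in a few lines of Python. This is a simplified illustration, not a production method: the five data points are made up (the lesson's graph uses 100 real sales), the names `fit_line`, `learning_rate`, and `steps` are assumptions, and the line starts flat at zero rather than at a random position so the result is repeatable.

```python
# Made-up example data: sizes (in 100s of square feet) and prices (in $1000s).
sizes = [5.0, 8.0, 10.0, 12.0, 15.0]
prices = [110.0, 170.0, 210.0, 250.0, 310.0]

def fit_line(xs, ys, learning_rate=0.005, steps=20000):
    """Find a slope and intercept by repeatedly nudging the line to reduce error."""
    slope, intercept = 0.0, 0.0  # assumption: start flat instead of random
    n = len(xs)
    for _ in range(steps):
        # Error: how far the line's guess is from each actual dot.
        errors = [(slope * x + intercept) - y for x, y in zip(xs, ys)]
        # Work out which way to tilt and shift the line (mean squared error).
        slope_step = 2 / n * sum(e * x for e, x in zip(errors, xs))
        intercept_step = 2 / n * sum(errors)
        # Move the line slightly in the direction that shrinks the error.
        slope -= learning_rate * slope_step
        intercept -= learning_rate * intercept_step
    return slope, intercept

slope, intercept = fit_line(sizes, prices)
# Predict the price for a size the machine has never seen (9 = 900 sq ft).
print(slope * 9.0 + intercept)
```

The made-up prices follow the pattern "20 times the size, plus 10", so the learned line should end up very close to that, and the prediction for size 9 should land near 190.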

Parents' note:

Linear regression is one of the simplest types of machine learning. It learns from past data to predict future prices.

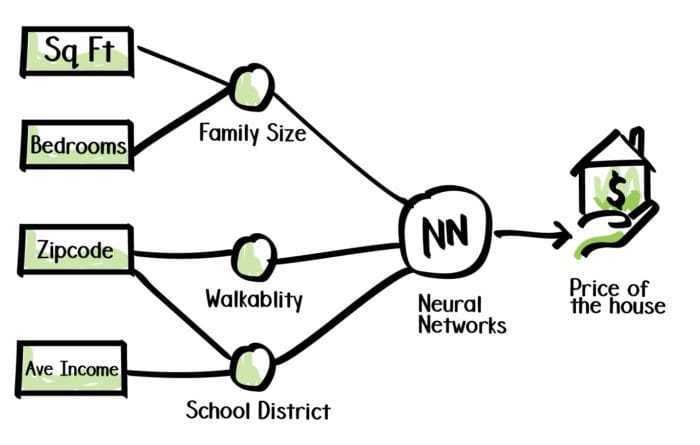

2. Learning from Factors (Neurons)

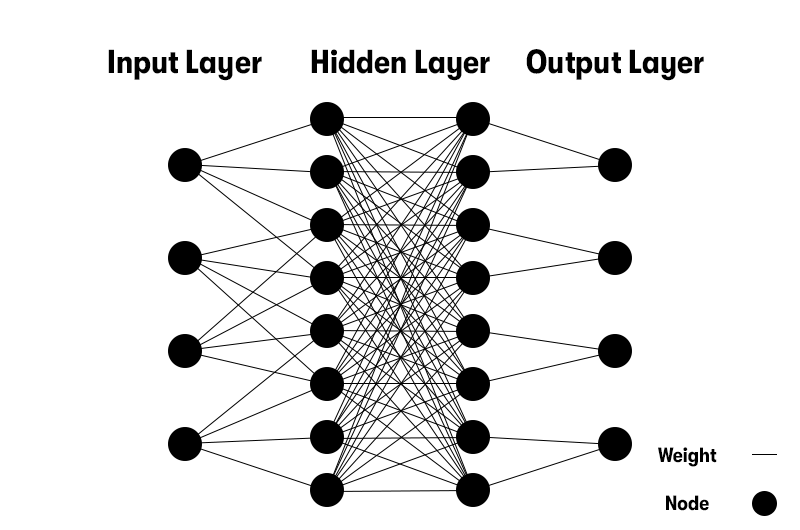

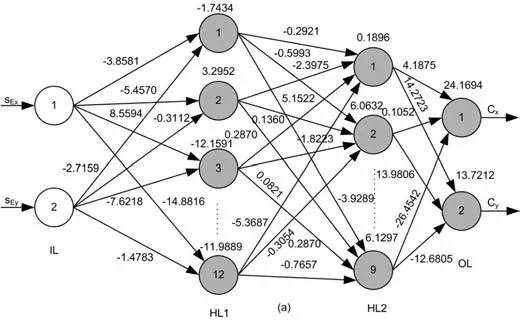

However, real estate isn't just about size. Price also depends on factors such as age and location. In a "Neural Network," each of these factors feeds into its own input Neuron.

- Input Neurons: Size, number of rooms, location, age, owner income, etc.

- Weights (The Nodes): The machine assigns a "weight" to each input. For example, it might learn that Location is 10x more important than Owner income.

- The Hidden Layer: This is where the magic happens. The machine combines these factors. For example, it might learn a complex rule: "If the house is old AND in a premium location, the price actually goes UP (Historical Value)."
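Here is a tiny sketch of how a hidden neuron can capture the "old AND premium location" rule. Every number in it is hand-picked for illustration (a real network would learn its weights from data), and the names `relu` and `estimate_price` are assumptions of this sketch. Inputs are 1 for "yes" and 0 for "no", and prices are in thousands of dollars.

```python
def relu(x):
    """Pass positive signals through and block negative ones."""
    return max(0.0, x)

def estimate_price(is_old, premium_location):
    """Toy network: two inputs, one hidden neuron, one output."""
    # Hidden neuron: only "fires" when the house is old AND in a premium location.
    historic = relu(1.0 * is_old + 1.0 * premium_location - 1.0)
    # Output: a weighted sum of the raw inputs plus the hidden neuron.
    return 200.0 - 30.0 * is_old + 100.0 * premium_location + 80.0 * historic

print(estimate_price(is_old=0, premium_location=0))  # new house, ordinary area
print(estimate_price(is_old=1, premium_location=0))  # old house, ordinary area
print(estimate_price(is_old=0, premium_location=1))  # new house, premium area
print(estimate_price(is_old=1, premium_location=1))  # old house, premium area
```

With these weights, being old lowers the price in an ordinary area but raises it in a premium one. A single straight line cannot express that kind of combined effect, which is why the hidden layer is needed.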

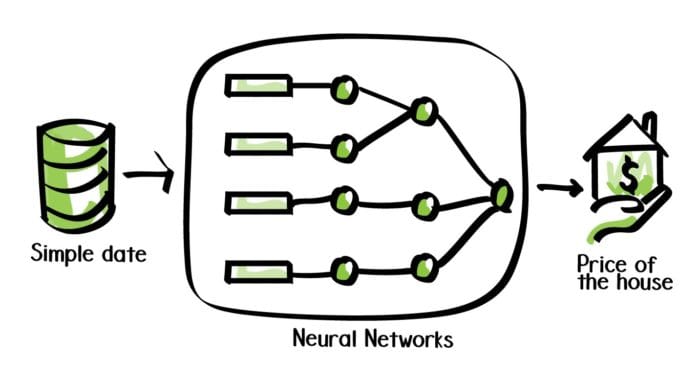

A "Deep" Neural Network is simply thousands of these mini-regressions stacked on top of each other. Instead of one simple line, it creates a complex, multi-dimensional web of patterns.

Deep Dive: How the Machine Actually Works (Trial and Error)

Think of it like a New Employee

Imagine you hire someone with zero experience. On day one, they make random guesses. But you give them feedback every time they're wrong. Over time, they adjust how they think — and get better.

A neural network does exactly this. Mechanically.

The Teacher: Labeled Data

You hand the machine thousands of examples with the answers already attached:

Photo of a cat → "this is a cat"

Photo of a dog → "this is a dog"

Photo of a cat → "this is a cat"

The machine doesn't know what a cat is yet. But it has the answer key for each photo.

The Nodes Inside the Machine

Inside the network are thousands of nodes (weights).

Each node controls how much attention the machine pays to something — the shape of the ears, the length of the snout, the size of the eyes.

At the start, all nodes are set randomly. The machine is essentially guessing.

The Learning Loop

This happens millions of times:

1. LOOK → machine sees a labeled photo

2. GUESS → it makes a prediction with its current node settings

"I think this is a dog" (wrong — it's a cat)

3. COMPARE → it checks its guess against the label

"I was wrong. How wrong? Pretty wrong."

4. ADJUST → every node that contributed to the wrong answer gets turned slightly in the right direction

That last step is the magic. The machine knows which nodes caused the mistake and nudges them, just a tiny bit, toward being more correct.
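The four steps above (LOOK, GUESS, COMPARE, ADJUST) can be sketched with a single artificial neuron. This is a deliberately tiny illustration under several assumptions: each "photo" is reduced to two made-up numbers (how pointy the ears are and how long the snout is), the weights start at zero instead of random values so the result is repeatable, and the names `predict`, `train`, `learning_rate`, and `epochs` are hypothetical. Real image models work on raw pixels and have far more weights.

```python
import math

# Made-up labeled examples: (ear_pointiness, snout_length) -> 1 for cat, 0 for dog.
examples = [
    ((0.9, 0.2), 1),
    ((0.8, 0.3), 1),
    ((0.3, 0.9), 0),
    ((0.2, 0.8), 0),
]

def predict(weights, bias, features):
    """Return a number between 0 and 1: how strongly the neuron thinks 'cat'."""
    z = sum(w * f for w, f in zip(weights, features)) + bias
    return 1 / (1 + math.exp(-z))

def train(examples, learning_rate=0.5, epochs=1000):
    weights, bias = [0.0, 0.0], 0.0  # assumption: start at zero, not random
    for _ in range(epochs):
        for features, label in examples:               # 1. LOOK
            guess = predict(weights, bias, features)   # 2. GUESS
            error = guess - label                      # 3. COMPARE
            # 4. ADJUST: nudge each weight a little, against the error.
            weights = [w - learning_rate * error * f
                       for w, f in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias

weights, bias = train(examples)
# Two new "photos" that were not in the training examples.
print(predict(weights, bias, (0.85, 0.25)))  # pointy ears, short snout
print(predict(weights, bias, (0.25, 0.85)))  # floppy ears, long snout
```

After training, the first number should come out above 0.5 (leaning "cat") and the second below 0.5 (leaning "dog"), even though neither example appeared during training.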

What Changes Over Time

Day 1: nodes are random. Gets it wrong constantly.

Day 2: nodes slightly better. Still mostly wrong.

...

Month 1: nodes have settled. Gets it right 95% of the time.

The nodes don't change randomly. They change based on their contribution to the mistake. Nodes that mattered a lot to the wrong answer get adjusted more. Nodes that barely contributed get adjusted less.
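One common way to get "bigger contribution, bigger adjustment" is to make each nudge proportional to both the size of the error and the strength of the signal that passed through that weight. The sketch below assumes that simple rule for a single neuron; the function name `adjustments` and all the numbers are made up for illustration.

```python
def adjustments(features, error, learning_rate=0.1):
    """Return how much each weight should change after one wrong guess.

    Each change is proportional to the error and to the input that
    flowed through that weight, so strong signals get bigger nudges.
    """
    return [-learning_rate * error * f for f in features]

# First input was strong (0.9), second was weak (0.1); the guess was off by 0.8.
changes = adjustments([0.9, 0.1], error=0.8)
print(changes)
```

The weight attached to the strong input receives a nudge about nine times larger than the weight attached to the weak one.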

Why It Works on New Data

Once training is done, the nodes are frozen.

Now when the machine sees a photo it has never seen before — no label, no answer key — it just runs it through those finely-tuned nodes and produces an answer.

It works because the nodes didn't memorize specific photos. They learned the underlying pattern — what makes a cat a cat, across thousands of examples.

Summary

The machine starts blind, makes guesses, gets told how wrong it is, and slightly adjusts its internal settings after every single example. Do that enough times with enough labeled data, and the settings gradually shape themselves into something that captures the patterns in that data.

Therefore, instead of giving the computer rules, we give it data. This is like showing a child thousands of pictures of cats instead of trying to explain the "rules" of what a cat looks like (pointy ears, whiskers, tail).

- Machine Learning: The computer looks at millions of examples and notices that when "A" happens, "B" usually follows.

- Generating Content: When you ask an AI to write a story, it isn't following a "storytelling rulebook." It is looking at its internal map of patterns to see which words usually go together to form a plot, a character, or a joke.
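The idea that "when A happens, B usually follows" can be shown with a toy word predictor that simply counts which word tends to come after each word. This is a big simplification: real language models use neural networks rather than a counting table, and the sample text and function names below are made up for illustration.

```python
from collections import Counter, defaultdict

# Made-up training text.
text = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."

def build_follow_counts(text):
    """For every word, count which words come right after it."""
    counts = defaultdict(Counter)
    words = text.split()
    for current_word, next_word in zip(words, words[1:]):
        counts[current_word][next_word] += 1
    return counts

def most_likely_next(counts, word):
    """Return the word seen most often after `word`, or None if never seen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]

counts = build_follow_counts(text)
print(most_likely_next(counts, "the"))    # "cat" follows "the" most often here
print(most_likely_next(counts, "sat"))    # "on" always follows "sat" here
print(most_likely_next(counts, "zebra"))  # never seen, so no prediction
```

Nobody wrote a rule saying "after 'the' comes 'cat'". The program picked that up purely from counting examples, which is the same basic idea that prediction-based AI builds on at a much larger scale.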